Probability Generating Functions — OCR A-Level Study Guide

Exam Board: OCR | Level: A-Level

Master one of the most powerful tools in A-Level Further Maths. This guide breaks down Probability Generating Functions (PGFs), showing you how to encode entire distributions into a single function, then differentiate to find the mean and variance. It’s your key to unlocking top marks in OCR exam questions on discrete distributions.

## Overview

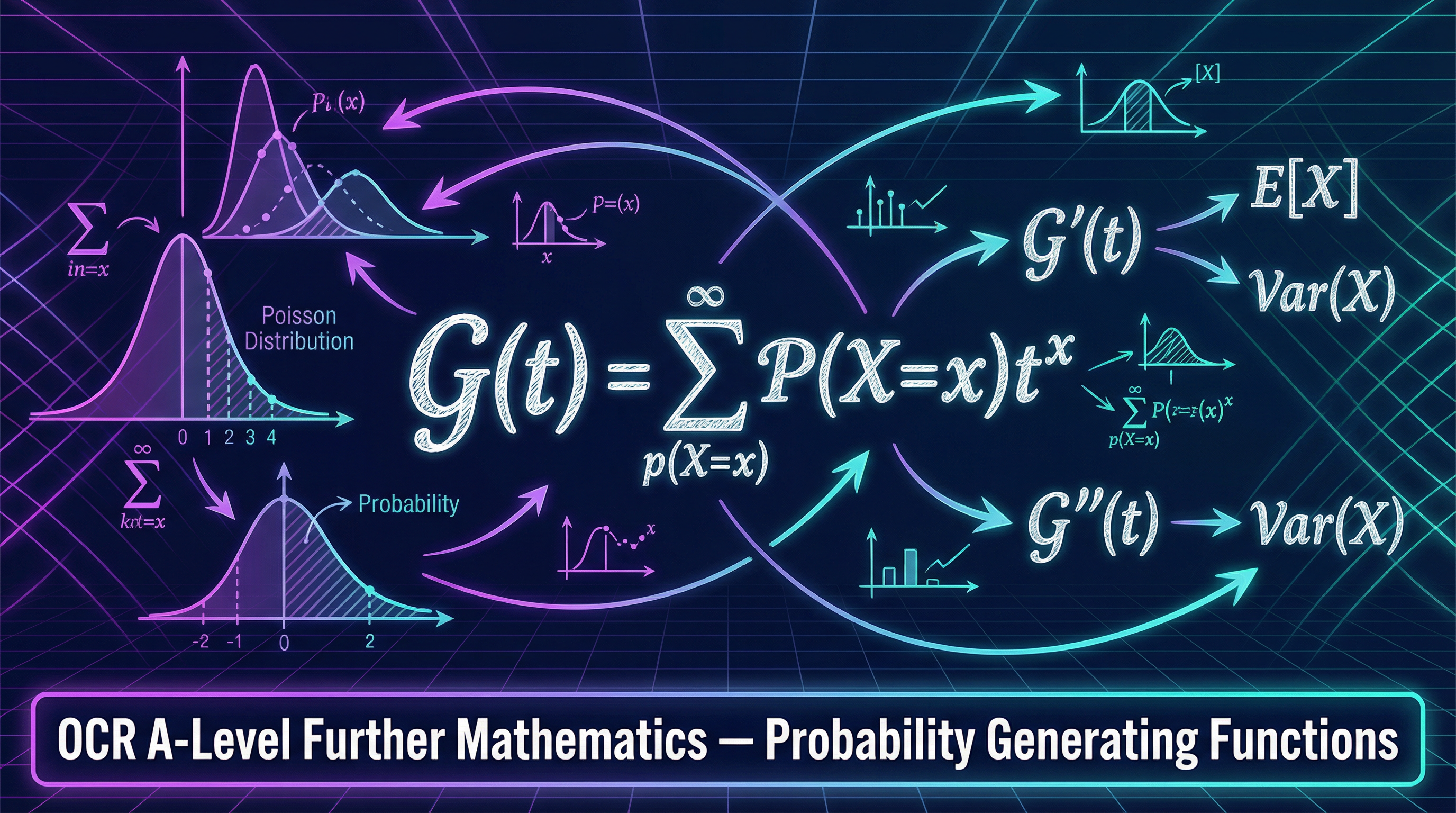

Probability Generating Functions (PGFs) are a cornerstone of advanced probability theory, covered in section 3.6 of the OCR A-Level Further Mathematics specification. A PGF represents a discrete probability distribution as a single polynomial or power series, G(t), rather than as a table of probabilities. This makes two calculations much shorter. First, the mean and variance follow from the first and second derivatives of G(t) evaluated at t=1. Second, the distribution of a sum of independent random variables follows from multiplying their PGFs (the convolution theorem).

Exam questions typically require candidates to derive PGFs for standard distributions (like Binomial, Poisson, and Geometric), use them to find moments, and apply the convolution theorem to solve problems. Mastery of PGFs demonstrates a deep understanding of the algebraic structure of probability, a skill highly rewarded by examiners.

## Key Concepts

### Concept 1: The Definition and Purpose of a PGF

A Probability Generating Function, G(t), is a power series that ‘encodes’ the probability mass function (PMF) of a discrete random variable X taking non-negative integer values. It is defined as:

**G(t) = E(tˣ) = Σ P(X=x) * tˣ**

Here, the summation is over all possible values, x, that the random variable X can take. The variable ‘t’ is a dummy variable, a placeholder that allows us to create this function. Think of it as a clothes hanger: the hanger (t) isn't the important part; it's the clothes (the probabilities and values of X) that it holds in a structured way. The primary purpose is to transform a sequence of probabilities into a single, manageable function. A crucial property, and a key exam check, is that **G(1) = 1**, because substituting t=1 reduces the sum to Σ P(X=x), which is the sum of all probabilities and must equal 1.

**Example**: A biased coin shows heads with probability p=1/3. Let X=1 for heads and X=0 for tails. The PMF is P(X=1)=1/3 and P(X=0)=2/3. The PGF is:

G(t) = P(X=0)t⁰ + P(X=1)t¹ = (2/3) * 1 + (1/3) * t = **(2+t)/3**.
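The definition can be checked numerically. The following is a minimal sketch, assuming the PMF is stored as a Python dictionary mapping each value x to P(X=x); the helper name `pgf_value` is made up for illustration. It evaluates the biased-coin PGF at t=1 (the G(1) = 1 check) and at t=2, where (2+t)/3 should give 4/3.

```python
from fractions import Fraction

def pgf_value(pmf, t):
    """Evaluate G(t) = sum of P(X=x) * t**x for a PMF given as {x: probability}."""
    return sum(p * t**x for x, p in pmf.items())

# Biased coin from the example: P(X=0) = 2/3, P(X=1) = 1/3
coin = {0: Fraction(2, 3), 1: Fraction(1, 3)}

g_at_1 = pgf_value(coin, 1)  # sum of all probabilities
g_at_2 = pgf_value(coin, 2)  # should match (2 + 2)/3

print(g_at_1, g_at_2)
```

Exact fractions are used so that the G(1) = 1 check is not blurred by floating-point rounding.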

### Concept 2: Extracting Moments (Mean and Variance)

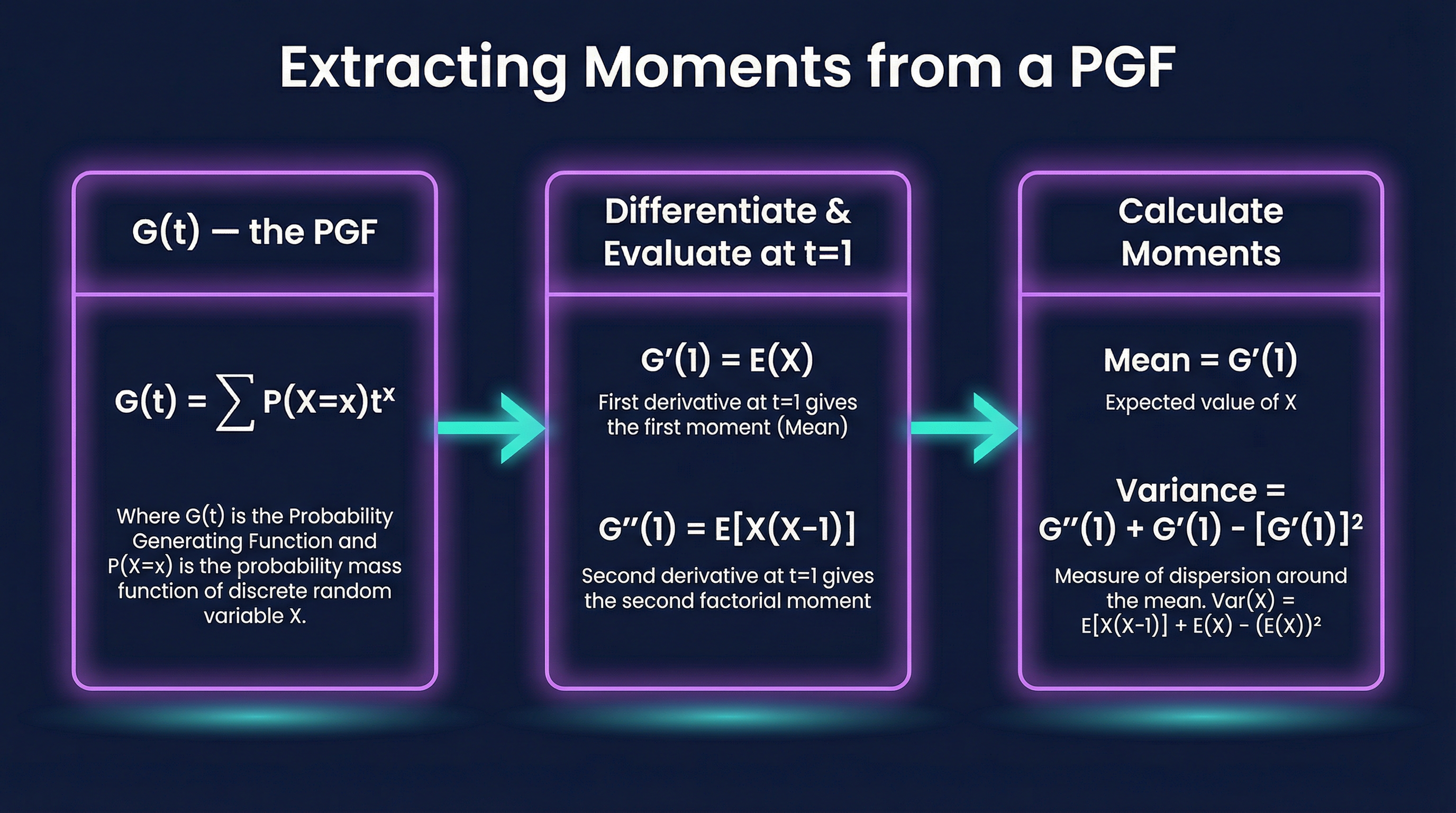

This is the most common application of PGFs in exams. By differentiating G(t) with respect to t and evaluating at t=1, we can find the moments of the distribution.

- **The Mean (Expected Value)**: The first derivative gives the mean.

**E(X) = G'(1)**

- **The Variance**: This requires the first and second derivatives.

First, the second derivative gives the *second factorial moment*: **E[X(X-1)] = G''(1)**. This is a very common point of error; G''(1) is NOT E(X²). Because E[X(X-1)] = E(X²) - E(X), we have E(X²) = G''(1) + G'(1), and substituting into Var(X) = E(X²) - [E(X)]² gives:

**Var(X) = G''(1) + G'(1) - [G'(1)]²**

Credit is often awarded for explicitly stating this variance formula before substitution.
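The moment formulas can be sketched in code for any finite PMF. Differentiating G(t) term by term gives G'(1) = Σ x P(X=x) and G''(1) = Σ x(x-1) P(X=x), which is what the functions below compute. The function names and the fair-die example are illustrative assumptions, not part of the specification.

```python
from fractions import Fraction

def pgf_derivatives_at_1(pmf):
    """Return (G'(1), G''(1)) for a finite PMF given as {x: probability}."""
    g1 = sum(x * p for x, p in pmf.items())            # G'(1)  = sum of x P(X=x)
    g2 = sum(x * (x - 1) * p for x, p in pmf.items())  # G''(1) = sum of x(x-1) P(X=x)
    return g1, g2

def mean_and_variance(pmf):
    """Apply E(X) = G'(1) and Var(X) = G''(1) + G'(1) - [G'(1)]^2."""
    g1, g2 = pgf_derivatives_at_1(pmf)
    return g1, g2 + g1 - g1**2

# Biased coin with G(t) = (2 + t)/3, so G'(1) = 1/3 and G''(1) = 0
coin = {0: Fraction(2, 3), 1: Fraction(1, 3)}
coin_mean, coin_var = mean_and_variance(coin)

# Fair six-sided die (hypothetical extra example)
die = {x: Fraction(1, 6) for x in range(1, 7)}
die_mean, die_var = mean_and_variance(die)

print(coin_mean, coin_var, die_mean, die_var)
```

For the coin, the variance comes out as 0 + 1/3 - 1/9 = 2/9, which agrees with pq for a Bernoulli variable with p = 1/3.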

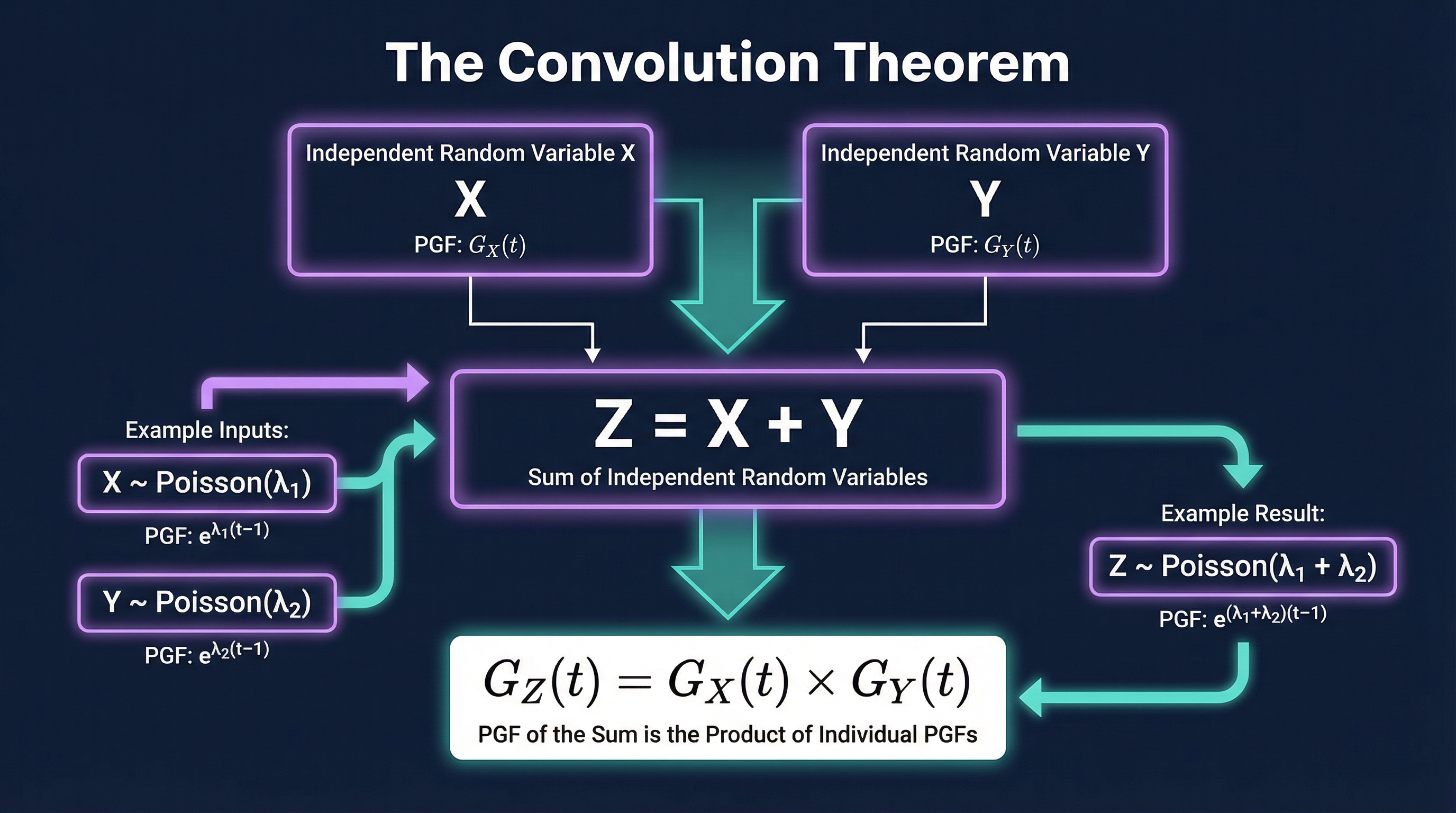

### Concept 3: The Convolution Theorem

This theorem is used for finding the distribution of the sum of two or more *independent* random variables. If Z = X + Y, where X and Y are independent, the PGF of Z is simply the product of the PGFs of X and Y.

**G_Z(t) = G_X(t) * G_Y(t)**

This is a powerful shortcut. For example, if you have two independent Poisson variables, X ~ Po(λ₁) and Y ~ Po(λ₂), you can find the distribution of their sum Z = X + Y by multiplying their PGFs: e^(λ₁(t-1)) * e^(λ₂(t-1)) = e^((λ₁+λ₂)(t-1)). This is the PGF of a Po(λ₁ + λ₂) distribution, so Z ~ Po(λ₁ + λ₂), and you avoid summing P(X=k)P(Y=z-k) over every k directly.
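For distributions with finitely many values, multiplying PGFs is just polynomial multiplication. Here is a small sketch, assuming each PGF is stored as a coefficient list where entry x holds P(X=x); the function name `multiply_pgfs` is hypothetical. It multiplies the PGF of the biased coin (p = 1/3) by itself, which should reproduce the Binomial(2, 1/3) probabilities.

```python
from fractions import Fraction

def multiply_pgfs(a, b):
    """Multiply two PGFs stored as coefficient lists, where a[x] = P(X=x)."""
    product = [Fraction(0)] * (len(a) + len(b) - 1)
    for i, pa in enumerate(a):
        for j, pb in enumerate(b):
            # The t**i term times the t**j term contributes to t**(i+j)
            product[i + j] += pa * pb
    return product

# G(t) = 2/3 + (1/3)t for one toss of the biased coin
coin = [Fraction(2, 3), Fraction(1, 3)]

# PGF of the number of heads in two independent tosses
two_tosses = multiply_pgfs(coin, coin)

print(two_tosses)
```

The coefficients 4/9, 4/9 and 1/9 are P(Z=0), P(Z=1) and P(Z=2), matching (q + pt)² with p = 1/3. The result only describes X + Y when the two variables are independent.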

## Mathematical Relationships

Below are the key formulas and PGFs for standard distributions, where q = 1 - p throughout. Candidates should be able to derive these but are strongly advised to memorise them for exam efficiency.

| Distribution | PMF: P(X=x) | PGF: G(t) | Mean E(X) | Variance Var(X) | Status |
| :--- | :--- | :--- | :--- | :--- | :--- |
| **Bernoulli(p)** | p for x=1, q for x=0 | `q + pt` | `p` | `pq` | Must memorise |
| **Binomial(n,p)** | `(nCx) pˣ qⁿ⁻ˣ` | `(q + pt)ⁿ` | `np` | `npq` | Must memorise |
| **Poisson(λ)** | `e^(-λ) λˣ / x!` | `e^(λ(t-1))` | `λ` | `λ` | Must memorise |
| **Geometric(p)** | `qˣ⁻¹ p` (for x=1,2,...) | `pt / (1-qt)` | `1/p` | `q/p²` | Must memorise |
| **Negative Binomial(r,p)** | `(x-1Cr-1) pʳ qˣ⁻ʳ` | `(pt / (1-qt))ʳ` | `r/p` | `rq/p²` | Given on formula sheet |
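The closed forms in the table can be sanity-checked by summing the defining series Σ P(X=x) tˣ directly. The sketch below does this for the Poisson and Geometric rows, assuming a truncated sum is accurate enough; the parameter values (λ = 2, p = 0.25, t = 0.5) and the numbers of terms are arbitrary choices for illustration.

```python
import math

def poisson_pgf_series(lam, t, terms=60):
    """Truncated sum of e^(-lam) lam^x / x! * t^x over x = 0, 1, ..., terms-1."""
    return sum(math.exp(-lam) * lam**x / math.factorial(x) * t**x
               for x in range(terms))

def geometric_pgf_series(p, t, terms=200):
    """Truncated sum of q^(x-1) p * t^x over x = 1, 2, ..., terms."""
    q = 1 - p
    return sum(q**(x - 1) * p * t**x for x in range(1, terms + 1))

lam, p, t = 2.0, 0.25, 0.5
q = 1 - p

poisson_closed = math.exp(lam * (t - 1))  # e^(lam(t-1))
geometric_closed = p * t / (1 - q * t)    # pt / (1 - qt)

print(poisson_pgf_series(lam, t), poisson_closed)
print(geometric_pgf_series(p, t), geometric_closed)
```

The Geometric series only converges when |qt| < 1, so the comparison is meaningful only for values of t in that range.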

**Key Moment Formulas:**

- **E(X) = G'(1)** (Must memorise)

- **Var(X) = G''(1) + G'(1) - [G'(1)]²** (Must memorise)

**Key Transformation Formula:**

- For Z = aX + b, **G_Z(t) = tᵇ * G_X(tᵃ)** (Must memorise)
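The transformation formula can be checked against the definition: build the PMF of Z = aX + b directly, evaluate its PGF, and compare with tᵇ G_X(tᵃ). This is a sketch under stated assumptions: a is a positive integer and b a non-negative integer (so Z still takes non-negative integer values), and the helper names are invented for the example.

```python
from fractions import Fraction

def pgf_value(pmf, t):
    """Evaluate G(t) = sum of P(X=x) * t**x for a PMF given as {x: probability}."""
    return sum(p * t**x for x, p in pmf.items())

def transform_pmf(pmf, a, b):
    """PMF of Z = aX + b: each value x moves to a*x + b with the same probability."""
    return {a * x + b: p for x, p in pmf.items()}

# Biased coin: P(X=0) = 2/3, P(X=1) = 1/3
coin = {0: Fraction(2, 3), 1: Fraction(1, 3)}
a, b = 2, 3  # assumed values for illustration
t = Fraction(1, 2)

direct = pgf_value(transform_pmf(coin, a, b), t)  # G_Z(t) from the definition
via_formula = t**b * pgf_value(coin, t**a)        # t^b * G_X(t^a)

print(direct, via_formula)
```

Both routes give 3/32 here, since Z takes the value 3 with probability 2/3 and the value 5 with probability 1/3.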

## Practical Applications

While PGFs are largely a theoretical tool in A-Level, they have significant real-world applications in fields that model discrete events, particularly where sums of variables are involved.

- **Queueing Theory**: In call centres or network traffic analysis, the number of arrivals in a given interval might be modelled by a Poisson distribution. PGFs can be used to analyse the total number of arrivals over several intervals or the properties of waiting times.

- **Genetics**: The number of offspring carrying a certain gene can be modelled as a random variable. PGFs are used in branching processes to model population growth over generations, calculating the probability of eventual extinction or survival of a genetic line.

- **Insurance Risk**: An insurance company might model the number of claims for different policy types using different distributions. PGFs allow them to combine these to find the distribution of the total number of claims, which is crucial for calculating capital reserves.