Study Notes

Overview

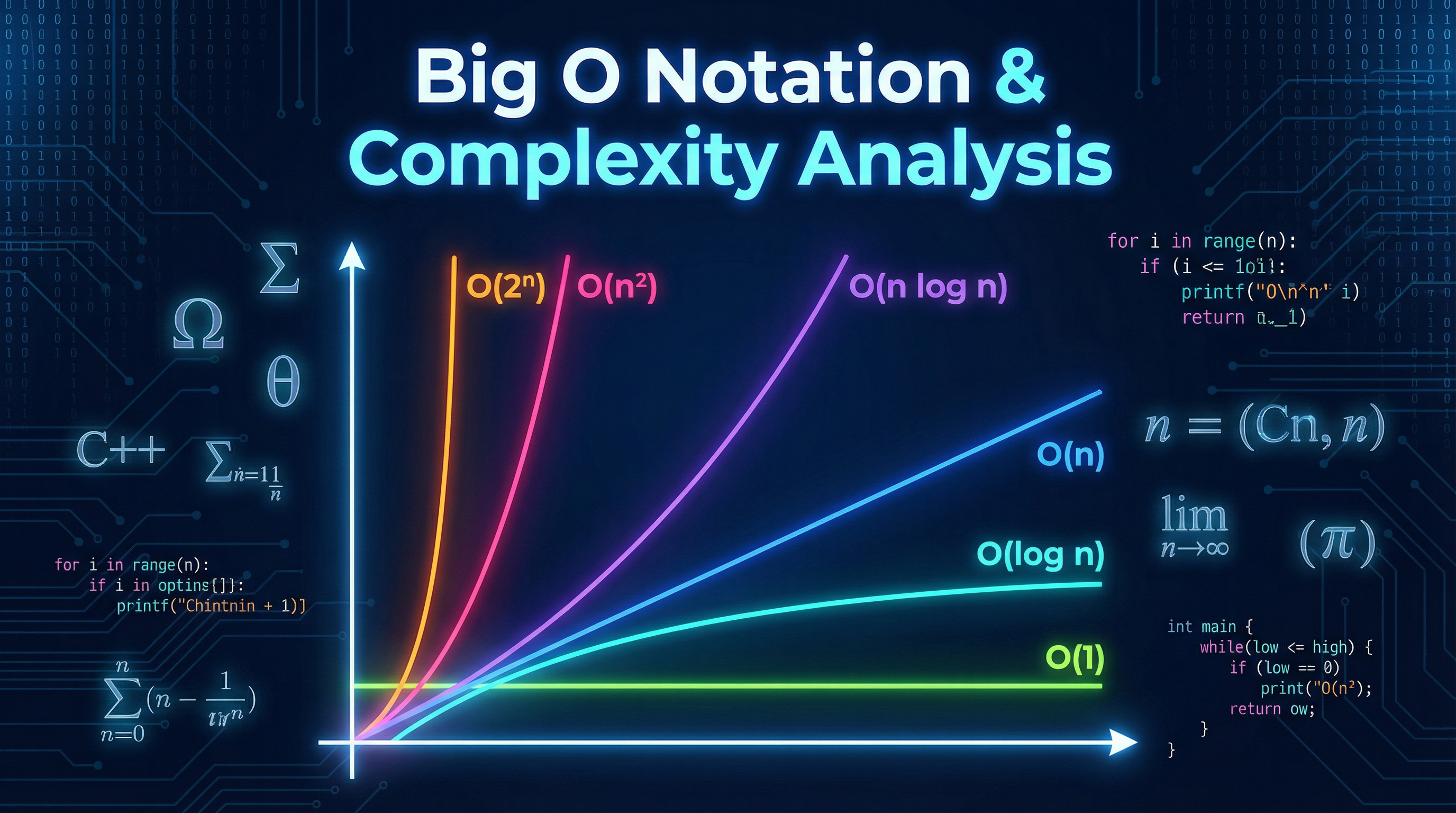

Big O notation is the system we use in computer science to describe an algorithm's efficiency in terms of how its running time or memory usage grows with the size of the input. For your Edexcel A-Level exam, this isn't just about memorising a few terms; it's about deep analysis. You'll be expected to look at a piece of pseudocode and derive its time and space complexity, often considering best, average, and worst-case scenarios. This topic, specification reference A3.5, is fundamental because it's how we make informed decisions about which algorithm to use when solving a problem. A solution that's fast for 10 items might be disastrous for 10 million, and Big O gives us the language to predict this. Examiners will test your ability to apply this knowledge (AO2) by asking you to compare algorithms and justify your reasoning with precise, evidence-based statements about their growth rates.

Key Concepts

Concept 1: Asymptotic Analysis & The Dominant Term

Big O is concerned with asymptotic analysis. This means we only care about how an algorithm performs as the input size, n, tends towards infinity. When analysing an algorithm, you might derive a precise formula for the number of operations, like 3n² + 5n + 12. However, for Big O, we simplify this by taking the dominant term (the term that grows fastest) and ignoring any coefficients. In this case, n² grows much faster than n, so the complexity is O(n²). The 3, 5n, and 12 become insignificant as n gets very large. This is a core marking point: correctly identifying the dominant term is the first step to earning credit.

Example: An algorithm performs 4n³ + 20n² + 100 operations. The dominant term is n³. Therefore, the time complexity is O(n³).

Concept 2: Time vs. Space Complexity

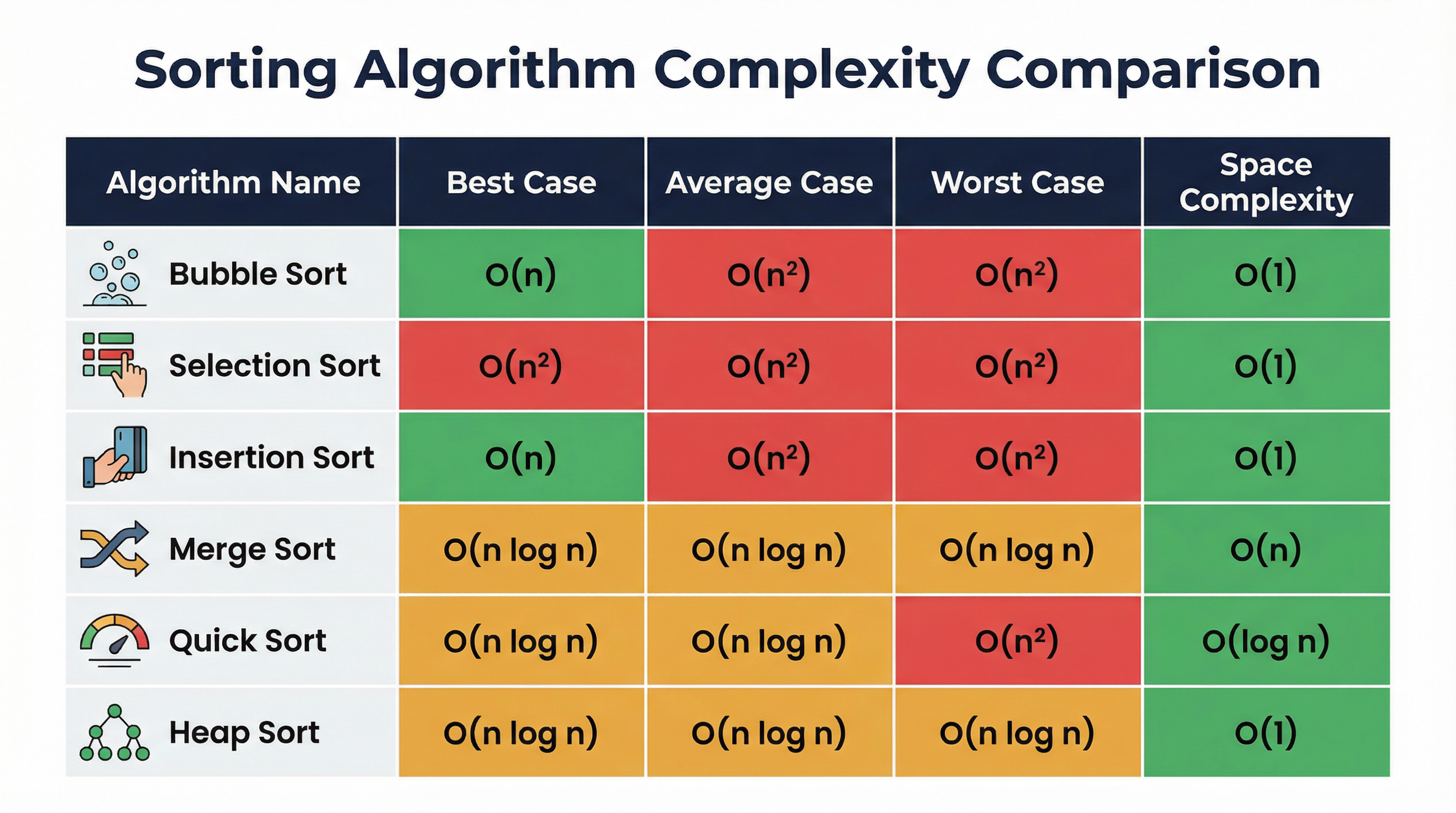

It's crucial to distinguish between these two types of efficiency. Examiners can ask about either, and confusing them is a common mistake.

- Time Complexity: Refers to the number of operations or CPU cycles an algorithm takes to complete. This is what we usually mean when we talk about Big O. It's a measure of how long the algorithm will run.

- Space Complexity: Refers to the amount of memory (RAM) an algorithm requires. This includes the space for the inputs themselves, plus any auxiliary or temporary data structures created, including the depth of the recursion stack.

Example: A linear search through an array of size n has a time complexity of O(n). Its space complexity is O(1) because it only needs a few variables (the loop counter, the target value) regardless of the array's size. In contrast, Merge Sort has a time complexity of O(n log n) but a space complexity of O(n) because it needs to create new arrays to hold the sorted halves.

Concept 3: Common Complexity Classes

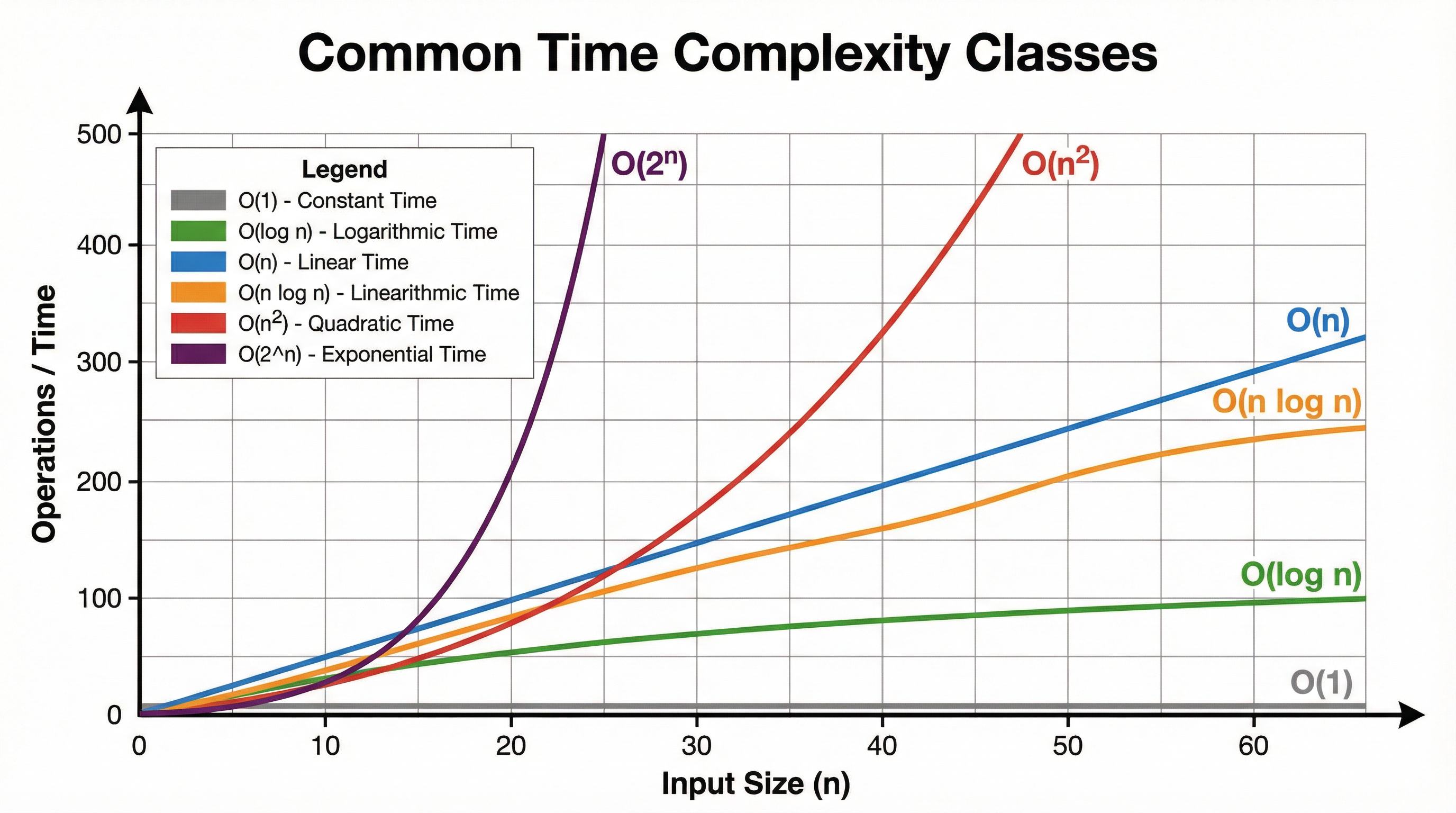

You must be able to identify and compare the following complexity classes. They are listed here from most efficient (best) to least efficient (worst).

- O(1) - Constant: The execution time is independent of the input size. Example: Accessing an array element at a given index.

- O(log n) - Logarithmic: The time increases with the log of the input size. Typically found in algorithms that halve the dataset in each step. Example: Binary Search.

- O(n) - Linear: The time is directly proportional to the input size. Example: Iterating through a list once.

- O(n log n) - Linearithmic: A very common complexity for efficient sorting algorithms. Example: Merge Sort, Heap Sort.

- O(n²) - Quadratic: Time is proportional to the square of the input size. Often involves nested loops over the dataset. Example: Bubble Sort, Insertion Sort.

- O(2ⁿ) - Exponential: Time doubles with each addition to the input size. These algorithms are only practical for very small n. Example: Brute-force solutions to the Travelling Salesman Problem.

Mathematical/Scientific Relationships

The most important mathematical relationship to understand is the growth rate of these functions. For large n:

O(1) < O(log n) < O(n) < O(n log n) < O(n²) < O(n³) < O(2ⁿ)

Another key relationship is the summation of an arithmetic series, which often appears in nested loop analysis:

1 + 2 + 3 + ... + n = n(n+1)/2

When you see this pattern, you should immediately recognise that the complexity is O(n²), because the dominant term in n(n+1)/2 is n²/2, and we discard the coefficient.

Practical Applications

Big O isn't just theoretical. It dictates real-world system performance.

- Database Indexing: Databases use B-Tree data structures, which allow for searching, insertion, and deletion in O(log n) time. Without this, searching a database with billions of records would be impossibly slow.

- Sorting Large Datasets: When a social media site sorts your feed, it uses an efficient algorithm like Merge Sort or a hybrid (Introsort), which works in O(n log n) time. Using Bubble Sort (O(n²)) would make the user experience terrible.

- Graph Traversal in GPS Navigation: GPS systems like Google Maps use algorithms like Dijkstra's or A* to find the shortest path. The efficiency of these algorithms (often related to O(E + V log V), where E is edges and V is vertices) is what allows them to calculate routes across a vast road network in seconds.

Visual Resources

2 diagrams and illustrations

Interactive Diagrams

2 interactive diagrams to visualise key concepts

Flowchart for the Bubble Sort algorithm, illustrating the nested loop structure that leads to its O(n²) complexity.

Flowchart for the Merge Sort algorithm, illustrating the 'divide and conquer' approach that results in O(n log n) complexity.

Worked Examples

3 detailed examples with solutions and examiner commentary

Practice Questions

Test your understanding — click to reveal model answers

What is the time complexity of accessing the 5th element in an array of size 10,000? Justify your answer. (2 marks)

Hint: Does the size of the array affect how long it takes to find an element if you already know its index?

An algorithm has a time complexity of O(n³). If it takes 2 seconds to run for an input of size n=100, estimate how long it will take for an input of size n=200. (3 marks)

Hint: How does the number of operations change when you double the input size for a cubic complexity algorithm?

A developer uses a recursive function to calculate the nth Fibonacci number. Analyse the time complexity of this naive recursive approach. (4 marks)

Hint: Draw the recursion tree for fib(5). How many times are fib(2) and fib(1) calculated? How does the tree grow?

Why is it important to consider the worst-case complexity of an algorithm? Give an example. (3 marks)

Hint: Think about applications where performance guarantees are critical.

Explain why an algorithm with O(n) space complexity may be unsuitable for an embedded system. (2 marks)

Hint: What are the typical constraints of embedded systems?